Introduction: From Graphics Rendering to AI Compute, Which NVIDIA GPUs Are Best for AI Projects?

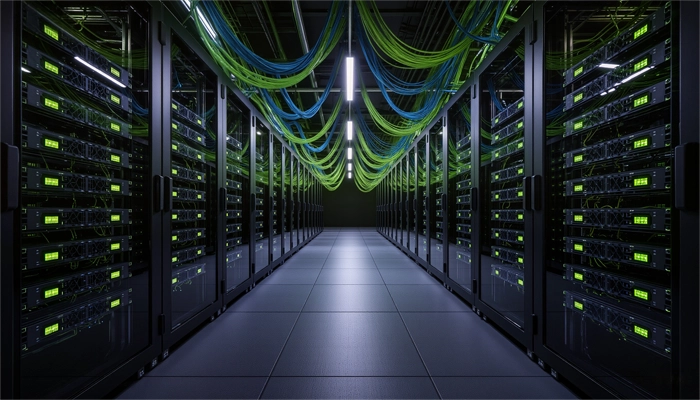

I. Why NVIDIA GPUs Are the Best Choice for AI Projects

1. Leading Performance: Data-center hardware such as the GB200, H200, and H100 is tightly integrated with NVIDIA's drivers and AI frameworks, so applications can get close to peak compute performance.

2. Full Compatibility: Official NVIDIA container images (distributed through the NGC catalog) support major frameworks including TensorFlow, PyTorch, MXNet, and JAX. Because the images are updated in step with the frameworks, developers rarely need to tune compatibility by hand.

3. All-in-One Ecosystem: Prebuilt model repositories, training scripts, monitoring dashboards, and automated deployment tools let developers focus on algorithms rather than infrastructure.

II. Choosing an NVIDIA GPU for Your AI Project: GB200, H200, and H100 Compared

- GB200: NVIDIA's flagship Grace Blackwell Superchip, which pairs two B200 GPUs with one Grace CPU. Designed for training trillion-parameter LLMs, it offers up to 384GB of HBM3e memory and 16TB/s of memory bandwidth. Because of its high resource density and power consumption, it is typically deployed in AI supercomputing clusters in enterprise or research environments.

- H200: An upgrade to the H100, the H200 provides 141GB of HBM3e memory and 4.8TB/s of bandwidth. It suits training and inference of medium- to large-scale models and is widely adopted in enterprise deployments.

- H100: The flagship of NVIDIA's Hopper architecture and the mainstream GPU for large-model training and inference in both enterprise and research settings. The SXM version has 80GB of HBM3 memory and 3.35TB/s of bandwidth, and its Transformer Engine with FP8 support accelerates transformer workloads, making it one of the most versatile high-performance GPUs in current AI projects.

Developers should choose based on task type (training vs. inference), budget, and deployment scale. The right choice affects not only performance but also resource efficiency and cost.
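As a rough way to connect model size to the memory figures above, the sketch below estimates how many devices are needed just to hold a model's weights. The bytes-per-parameter values follow directly from each number format; the 1.2 overhead factor for activations, KV cache, and runtime buffers is a hypothetical rule of thumb, not an NVIDIA figure, and training needs far more memory for gradients and optimizer states. The GB200 entry treats one superchip (384GB spread across its two B200 GPUs) as a single unit.

```python
import math

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "fp16": 2.0, "fp8": 1.0, "fp4": 0.5}

# Per-device memory capacities quoted in this article, in GB.
# GB200 counts one superchip (two B200 GPUs) as one unit.
CAPACITY_GB = {"H100": 80, "H200": 141, "GB200": 384}


def weights_gb(params_billion: float, precision: str) -> float:
    """Memory for the weights alone, in decimal GB (1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


def min_devices(params_billion: float, precision: str, device: str,
                overhead: float = 1.2) -> int:
    """Smallest device count whose combined memory covers weights * overhead.

    `overhead` is a hypothetical inference-time rule of thumb (assumption);
    training needs far more memory for gradients and optimizer state.
    """
    needed = weights_gb(params_billion, precision) * overhead
    return math.ceil(needed / CAPACITY_GB[device])


if __name__ == "__main__":
    for device in CAPACITY_GB:
        n = min_devices(70, "bf16", device)
        print(f"70B model in BF16 on {device}: at least {n} device(s)")
```

For a 70B-parameter model in BF16 (140GB of weights), this gives three H100s, two H200s, or one GB200 superchip. It ignores parallelism and interconnect overheads, so treat it as a starting point rather than a sizing guarantee.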

III. Practical Tips to Maximize NVIDIA GPU Deployment Efficiency

1. Cost Optimization: Compared with standard cloud GPU instances, GPU rentals with hourly billing can offer better value for short-term projects, especially model validation and small-scale training.

2. Performance Tuning: Efficient task scheduling, memory optimization, and mixed-precision training can significantly improve GPU utilization.

3. Platform Tools: For example, Canopy Wave provides cloud APIs and GPU rental services that give developers quick access to NVIDIA GPUs. Its flexible billing helps optimize resource usage, and its auto-scaling and cost-monitoring features let teams scale out during peak demand and release instances when idle, further reducing overall costs.
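The rental-versus-reservation trade-off in the cost tip comes down to break-even arithmetic: hourly billing is cheaper whenever monthly usage stays below the flat monthly price divided by the hourly rate. The sketch below assumes a single GPU billed either per hour or at a flat monthly rate; the $3.00/hour and $1,500/month prices are hypothetical placeholders, not quotes from Canopy Wave or any other provider.

```python
# Average hours in a month (8,760 hours per year / 12).
HOURS_PER_MONTH = 730


def monthly_rental_cost(gpu_hours: float, hourly_rate: float) -> float:
    """Hourly billing: pay only for the GPU-hours actually used."""
    return gpu_hours * hourly_rate


def break_even_hours(hourly_rate: float, monthly_rate: float) -> float:
    """Monthly usage at which hourly billing costs the same as a flat monthly rate."""
    return monthly_rate / hourly_rate


def cheaper_option(gpu_hours: float, hourly_rate: float, monthly_rate: float) -> str:
    """Return which billing model is cheaper for a given monthly usage."""
    if monthly_rental_cost(gpu_hours, hourly_rate) <= monthly_rate:
        return "hourly"
    return "monthly"


if __name__ == "__main__":
    # Hypothetical prices for one GPU (assumption, not real quotes).
    hourly, monthly = 3.0, 1500.0
    hours = break_even_hours(hourly, monthly)
    print(f"Break-even: {hours:.0f} GPU-hours/month "
          f"({hours / HOURS_PER_MONTH:.0%} utilization)")
    for usage in (120, 600):
        print(usage, "GPU-hours ->", cheaper_option(usage, hourly, monthly))
```

With these placeholder prices the break-even point is 500 GPU-hours per month (about 68% utilization), so a validation run using 120 GPU-hours is far cheaper on hourly billing, while near-continuous use favors the flat rate. Releasing idle instances lowers monthly GPU-hours and keeps more workloads on the hourly side of that line.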

IV. NVIDIA, AMD, and Google: A Comparative Analysis for AI Developers

| Dimension | NVIDIA GPU | AMD GPU | Google TPU |
| --- | --- | --- | --- |
| AI Performance | Tensor Cores support mixed-precision training and fast inference; optimized for large models | Instinct GPUs have Matrix Cores, but less mature software optimization can mean slower training and higher inference latency | Excellent performance on specific workloads; optimized for large-scale training but less versatile |
| Framework Compatibility | Native support for PyTorch, TensorFlow, JAX, MXNet, and other mainstream frameworks | Relies on ROCm; narrower compatibility and less stable support for some frameworks | Primarily supports TensorFlow and JAX; limited PyTorch support (via PyTorch/XLA) |
| Toolchain Support | Mature ecosystem (CUDA, cuDNN, TensorRT, NCCL), continuously updated | ROCm toolchain still maturing; some features missing or require manual setup | Uses the XLA compiler; models may need adaptation |
| Deployment Flexibility | Deployable on local servers, private and public clouds, and containers | Fewer prebuilt, validated container images; deployment is often more complex | Available only on Google Cloud; less flexible |
| Community & Resources | Large developer community, extensive tutorials, active forums | Smaller community, scattered documentation, limited support | Community centered on TensorFlow and JAX; smaller overall ecosystem |
| Use Case Suitability | Training, inference, content generation, and AI agent deployment | Lightweight graphics tasks or entry-level AI experimentation | Large-scale model training, especially within Google Cloud infrastructure |

V. NVIDIA's Strengthening Strategic Position in the Era of Large Models

The GB200, built on the Blackwell architecture, combines two B200 GPUs with a Grace CPU, delivering higher compute density and energy efficiency in a compact footprint. It is purpose-built for training trillion-parameter models. Its support for FP4 and FP6 precision significantly reduces compute cost and memory usage while largely preserving model accuracy, which makes it especially well suited to generative AI and multimodal inference. With up to 384GB of HBM3e memory and 16TB/s of bandwidth, it greatly increases data throughput and eases the memory bottlenecks of large-scale model training.
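Bandwidth matters because autoregressive decoding at small batch sizes is usually memory-bound: each generated token requires reading roughly all model weights from HBM once. The sketch below turns that rule of thumb into an upper bound on single-stream tokens per second, using the bandwidth figures quoted in this article. It ignores KV-cache reads, compute time, and multi-GPU communication, and it treats the GB200's 16TB/s as fully usable by one model copy, so real throughput will be lower; it is an illustration, not a benchmark.

```python
# Aggregate HBM bandwidth quoted in this article, in TB/s.
# (The GB200 figure covers both B200 GPUs in the superchip.)
BANDWIDTH_TBPS = {"H100": 3.35, "H200": 4.8, "GB200": 16.0}

# Storage per parameter for each precision, in bytes.
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "fp6": 0.75, "fp4": 0.5}


def max_decode_tokens_per_s(params_billion: float, precision: str, device: str) -> float:
    """Bandwidth-bound upper limit on single-stream decoding speed.

    Assumes every generated token reads all weights from HBM exactly once
    and ignores KV-cache traffic, compute time, and inter-GPU communication.
    """
    weight_bytes = params_billion * 1e9 * BYTES_PER_PARAM[precision]
    bandwidth_bytes_per_s = BANDWIDTH_TBPS[device] * 1e12
    return bandwidth_bytes_per_s / weight_bytes


if __name__ == "__main__":
    for device in BANDWIDTH_TBPS:
        for precision in ("fp8", "fp4"):
            tps = max_decode_tokens_per_s(70, precision, device)
            print(f"{device} {precision}: <= {tps:.0f} tokens/s for a 70B model")
```

Halving the bytes per parameter (FP8 to FP4) doubles this bound, which is why lower-precision formats and higher bandwidth both ease memory bottlenecks. Batching changes the picture, since weights read once are then reused across many sequences.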

NVIDIA's software stack is a key differentiator. The CUDA platform provides a unified parallel-computing interface that underpins major AI frameworks such as PyTorch, TensorFlow, and JAX, lowering the barrier to entry for developers. TensorRT and the Triton Inference Server optimize model performance and enable concurrent multi-model deployment with automated batching, which suits enterprise-scale applications. MIG (Multi-Instance GPU) technology partitions a single GPU into as many as seven isolated instances, providing resource isolation and parallel workloads that improve GPU utilization in cloud and multi-tenant environments.
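To illustrate what automated (dynamic) batching does, the sketch below groups incoming requests into batches that close either when they reach a maximum size or when the oldest queued request has waited longer than a maximum delay. It is loosely modeled on the idea behind Triton's dynamic batcher, whose model configuration exposes settings such as `max_batch_size` and `max_queue_delay_microseconds`, but it is a simplified offline simulation written for illustration; the `Request` class and `dynamic_batches` function are hypothetical, not part of Triton's API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Request:
    """Hypothetical inference request: an id and its arrival time."""
    req_id: int
    arrival_ms: float


def dynamic_batches(requests: List[Request], max_batch_size: int,
                    max_queue_delay_ms: float) -> List[List[int]]:
    """Offline simulation of size- and delay-bounded dynamic batching.

    A batch closes when it already holds max_batch_size requests, or when the
    next request arrives more than max_queue_delay_ms after the oldest request
    in the batch (i.e., the batch would have been dispatched at its deadline).
    """
    batches: List[List[int]] = []
    current: List[Request] = []
    for req in sorted(requests, key=lambda r: r.arrival_ms):
        if current and (
            len(current) == max_batch_size
            or req.arrival_ms - current[0].arrival_ms > max_queue_delay_ms
        ):
            batches.append([r.req_id for r in current])
            current = []
        current.append(req)
    if current:  # flush whatever is still queued
        batches.append([r.req_id for r in current])
    return batches


if __name__ == "__main__":
    arrivals = [0, 1, 2, 3, 10, 11, 30]
    reqs = [Request(i, t) for i, t in enumerate(arrivals)]
    print(dynamic_batches(reqs, max_batch_size=4, max_queue_delay_ms=5))
```

With requests arriving at 0, 1, 2, 3, 10, 11, and 30 ms, a batch size of 4, and a 5 ms delay, this yields `[[0, 1, 2, 3], [4, 5], [6]]`: the first batch fills up, the second times out, and the last request is dispatched alone. Longer delays produce fuller batches and better GPU utilization at the cost of added latency.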